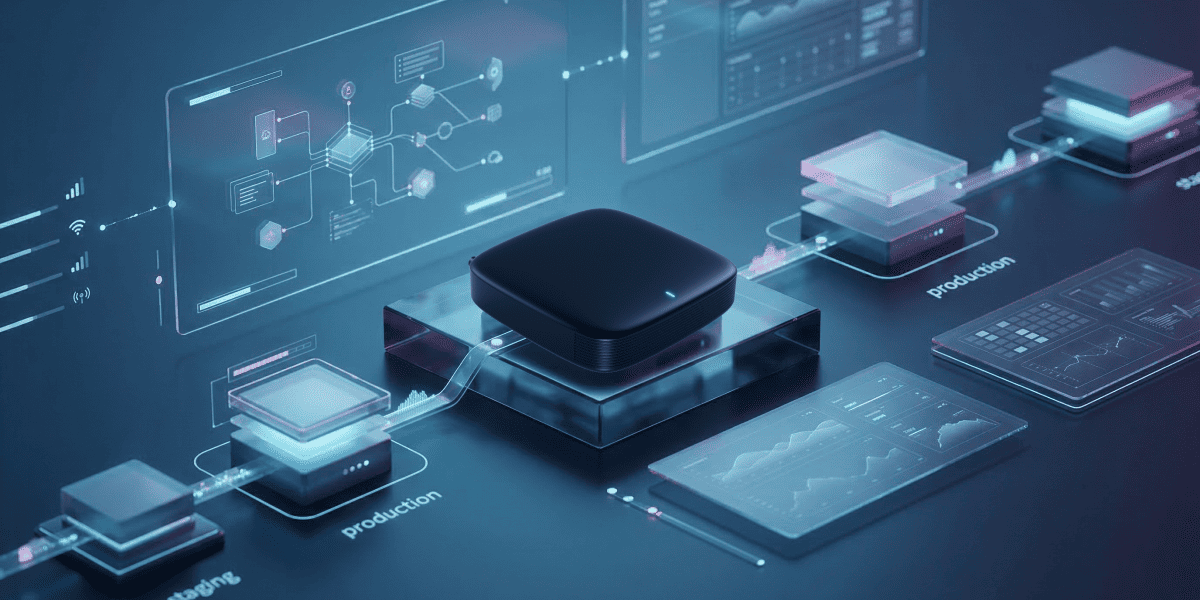

How to Properly Build a Staging Environment for Testing IPTV Updates

Any update in an IPTV ecosystem affects more than just one component. Changes in set-top box firmware, the client application, middleware, or content delivery logic can impact authentication, channel launch, EPG, VoD, DRM, and even network stability on the subscriber device side.

This is why IPTV update testing needs a staging environment: not as a formal pre-release check, but as a way to see in advance how an update behaves under conditions that are as close to reality as possible.

For distributors, operators, and integrators, this is especially important. An update failure quickly goes beyond a purely technical issue: support workload increases, user experience declines, and the cost of rollbacks and repeated deployments grows.

The more complex the infrastructure and the more diverse the device fleet, the greater the value of a staging environment as a tool for quality control and risk reduction.

A realistic staging environment matters more than an “ideal” test lab

One of the most common mistakes in IPTV platform testing is building staging as an overly “clean” lab. In such an environment, devices are in the same state, the network is stable, and integrations work according to a reference scenario. Production almost always looks different: subscribers run different software versions and set-top box models, have varying connection quality, and do not always follow the same usage scenarios.

That's why the IPTV staging environment must reproduce real operating conditions rather than ideal ones. This means having several device types, different starting software versions, multiple network profiles, and typical operator platform configurations. Only in such a setup can you understand how an update will behave not in a controlled test, but in a live infrastructure where identical conditions almost never exist.
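One way to keep such a setup explicit is to enumerate the combinations the staging environment is expected to cover. The following is a minimal sketch, not a prescribed method: the model names, version numbers, and network profile labels are hypothetical placeholders to be replaced with the operator's real inventory.

```python
from itertools import product

# Hypothetical inventory (assumption): substitute the real fleet data.
DEVICE_MODELS = ["stb-a", "stb-b"]
START_VERSIONS = ["1.8.0", "1.9.2"]
NETWORK_PROFILES = ["stable", "lossy-wifi", "high-latency"]


def build_matrix(models, versions, profiles):
    """Return every (model, starting version, network profile) combination."""
    return [
        {"model": m, "start_version": v, "network": n}
        for m, v, n in product(models, versions, profiles)
    ]


matrix = build_matrix(DEVICE_MODELS, START_VERSIONS, NETWORK_PROFILES)
print(f"{len(matrix)} staging configurations to cover")
```

A full cross product grows quickly, so in practice a team would likely prune it to the combinations that actually exist in the subscriber base.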

You need to test not only the new version, but the entire upgrade path

In many IPTV projects, failures occur not because the new build itself is unstable, but because the upgrade path was not fully tested. Some devices move to the new version from the previous release. Others skip one or two intermediate versions, or have not been updated for a long time and carry old settings, local cache, and accumulated non-standard states.

Put simply, if staging only checks the scenario of “the latest version installed over the latest version,” the picture becomes far too optimistic.

Because of this, it's important to reproduce different upgrade trajectories in the test environment. You need to see how the device behaves after an interrupted download, during a temporary network loss, after a power restart, during a repeated installation attempt, and in a rollback scenario.
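The upgrade trajectories described above can be listed as test cases by pairing every older release with each interruption scenario. This is a sketch under stated assumptions: the release history is invented for illustration, and the fault labels are simply names for the scenarios mentioned in the text, not identifiers from any real tool.

```python
from dataclasses import dataclass

# Hypothetical release history, oldest first (assumption).
RELEASES = ["1.7.0", "1.8.0", "1.9.0", "2.0.0"]

# Labels for the scenarios named in the text; "none" is the clean upgrade.
FAULTS = [
    "none",
    "interrupted_download",
    "network_loss",
    "power_restart",
    "repeated_install",
    "rollback",
]


@dataclass(frozen=True)
class UpgradeCase:
    start_version: str
    target_version: str
    skipped_releases: int  # intermediate releases the device never installed
    fault: str


def upgrade_cases(releases, faults):
    """Pair every older release with every fault scenario, targeting the newest release."""
    target = releases[-1]
    cases = []
    for index, start in enumerate(releases[:-1]):
        skipped = len(releases) - 2 - index
        for fault in faults:
            cases.append(UpgradeCase(start, target, skipped, fault))
    return cases


cases = upgrade_cases(RELEASES, FAULTS)
print(len(cases), "upgrade cases")
```

Each case still needs a device prepared in the matching starting state, including old settings and cache, which the sketch does not attempt to model.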

For an operator, regression testing for streaming platforms is a matter of subscriber base manageability, and the more accurately the real version transition paths are tested, the lower the risk of large-scale issues after release.

The biggest risks are often hidden at system boundaries

An IPTV device update almost never works in isolation. Even if the main change affects only the client side and has passed device compatibility testing, the client still interacts with the portal or middleware, CDN, CAS/DRM, analytics, billing, recommendation systems, and monitoring tools. For this reason, staging should verify not only the update installation itself, but also how all critical integrations behave afterward.

Authentication scenarios, channel list loading, stream startup, channel switching, timeshift, catch-up, and VoD workflows are especially important.

Quite often, the problem appears exactly at the boundary between components. For example, the set-top box updates successfully but begins to authenticate more slowly, sends analytics events incorrectly, or reacts differently to DRM responses. Everything may look correct inside one module, but for the subscriber the result is still service degradation.
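A post-update check of these boundaries could record both whether each integration still works and whether it still responds within an expected time, since slower authentication counts as degradation even when it succeeds. The sketch below assumes each check is a plain callable supplied by the team; the check names, time budgets, and placeholder lambdas are hypothetical.

```python
import time


def run_checks(checks):
    """Run each named check; record pass/fail and whether it met its time budget."""
    results = {}
    for name, (check, budget_s) in checks.items():
        started = time.monotonic()
        try:
            ok = bool(check())
        except Exception:
            # A crash inside a check is treated as a failed integration.
            ok = False
        elapsed = time.monotonic() - started
        results[name] = {
            "ok": ok,
            "within_budget": elapsed <= budget_s,
            "elapsed_s": elapsed,
        }
    return results


def all_clear(results):
    """True only if every check passed and stayed within its time budget."""
    return all(r["ok"] and r["within_budget"] for r in results.values())


# Placeholder checks (assumption): real ones would drive an updated device in staging.
example_checks = {
    "authentication": (lambda: True, 2.0),
    "channel_list": (lambda: True, 3.0),
    "stream_startup": (lambda: True, 5.0),
}
print(all_clear(run_checks(example_checks)))
```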

What a staging environment can reveal before an incident happens

The most difficult defects rarely sit on the surface. They usually appear where several factors intersect at once: the accumulated state of the device, an unstable network, an upgrade from a non-current version, and dependence on external services. These are exactly the scenarios OTT staging infrastructure must detect before an update reaches the production network.

At the same time, it's not only obvious failures that matter, but also early indirect signals. If, after installing a new version, channel startup time increases, the number of repeated backend requests grows, repeated authentications happen more often, or the device takes longer to return to an operational state after rebooting, then there are serious reasons to reconsider the release.

Even if the basic smoke test has passed, such changes often become early indicators of future large-scale problems.
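These indirect signals can be checked mechanically by comparing the same metrics before and after the update. The following is a minimal sketch: the metric names and tolerated increases are assumptions chosen for illustration, not recommended values.

```python
# Hypothetical metrics and the relative increase tolerated for each (assumption).
THRESHOLDS = {
    "channel_startup_ms": 0.10,
    "backend_retries_per_hour": 0.20,
    "reauth_per_day": 0.20,
    "reboot_recovery_s": 0.15,
}


def find_regressions(baseline, candidate, thresholds):
    """Return metrics whose relative increase over baseline exceeds the threshold."""
    flagged = {}
    for name, limit in thresholds.items():
        before = baseline[name]
        after = candidate[name]
        if before <= 0:
            # A relative change is undefined without a positive baseline.
            continue
        change = (after - before) / before
        if change > limit:
            flagged[name] = round(change, 3)
    return flagged


baseline = {"channel_startup_ms": 1200, "backend_retries_per_hour": 40,
            "reauth_per_day": 2, "reboot_recovery_s": 30}
candidate = {"channel_startup_ms": 1500, "backend_retries_per_hour": 44,
             "reauth_per_day": 2, "reboot_recovery_s": 33}

print(find_regressions(baseline, candidate, THRESHOLDS))
```

In this invented example only channel startup time is flagged, because it rose by 25% against a 10% tolerance while the other metrics stayed within their limits.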

During IPTV platform debugging, special attention should be paid to different device classes and conditions across the subscriber base. The older and more heterogeneous the fleet, the more important it is to test not only the “reference” upgrade to the new version, but also edge-case scenarios, ideally via automated IPTV testing pipelines.

In practice, these are often the best indicators of whether an update is ready for broad rollout, needs a pilot launch on a limited group, or requires additional refinement before production release.

Without observability, staging becomes a formality

Even a well-assembled test environment will not deliver the needed value if it doesn't allow deep observation of system behavior. A simple answer like “the update was installed successfully” is not enough. It's important to see how the load on the device has changed, how stable the network remains, how the client behaves after reboot, whether playback error rates increase, and whether there are side effects in telemetry.

Observability is what turns staging into a full-fledged decision-making tool. When a team can compare a release not only by the presence or absence of a critical defect, but also by performance, resilience, and service interaction metrics, the quality of the assessment and of IPTV update validation becomes entirely different.

Sometimes an update looks acceptable visually yet noticeably worsens key indicators in staging. Such a signal from monitoring updates before rollout is far more valuable than a formally completed checklist.

The release strategy should also be validated in advance

In set-top box update testing, a strong staging environment helps assess not only the build itself, but also the release model. Even before publication, it becomes possible to understand which device groups are safer to update first, how quickly anomalies appear, and at what point the rollout should be paused. IPTV release management is especially important for operators with a large and diverse set-top box fleet, where a single launch scenario is rarely optimal.

If staging shows that some devices are more sensitive to the update than others, it makes sense to roll the release out in waves. This approach reduces the risk of a mass incident and gives the team time to analyze early results. As a result, the update itself stops being a point of uncertainty and becomes a manageable process with clearly understood launch, control, and response conditions.
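A wave-based rollout of this kind can be sketched as two small decisions: the order in which device groups are updated, and the condition under which the rollout pauses. The group names, the `staging_error_rate` field, and the pause ratio below are hypothetical and only illustrate the idea.

```python
def plan_waves(groups, wave_count):
    """Order groups from least to most sensitive and split them into waves."""
    ordered = sorted(groups, key=lambda g: g["staging_error_rate"])
    waves = [[] for _ in range(wave_count)]
    for i, group in enumerate(ordered):
        # Spread groups evenly; the least sensitive groups land in the earliest waves.
        wave_index = min(i * wave_count // len(ordered), wave_count - 1)
        waves[wave_index].append(group["name"])
    return waves


def should_pause(observed_error_rate, baseline_error_rate, max_ratio=2.0):
    """Pause when the observed error rate exceeds the baseline by more than max_ratio."""
    return observed_error_rate > baseline_error_rate * max_ratio


# Hypothetical staging results per device group (assumption).
groups = [
    {"name": "old-stb", "staging_error_rate": 0.04},
    {"name": "new-stb", "staging_error_rate": 0.01},
    {"name": "mid-stb", "staging_error_rate": 0.02},
]

print(plan_waves(groups, 2))
print(should_pause(0.05, 0.02))
```

Updating the least sensitive groups first is one possible policy. An operator might instead prefer to start with a small slice of every group to surface model-specific issues earlier.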

A staging environment for IPTV system testing and updates is not an auxiliary test bench, but an important part of a mature release management process.

Operator testing infrastructure must reflect the real infrastructure, take different version transition scenarios into account, verify behavior at system boundaries, and provide the team with enough data for diagnosis. Only then can an update be assessed by its readiness to perform within the operator’s network.

For the IPTV market, this approach is tied to service stability, operational efficiency, and the quality of the customer experience. For quality assurance on IPTV platforms, the principle is simple: the more accurately staging reflects production and reveals risks before launch, the more confidently new versions can be released without unnecessary compromises between deployment speed and reliability of the outcome.
